The Missing Floor

I’m watching my agents work. Not just what they produce, but also how they move. There’s a moment that keeps happening over and over, a stutter, and I think it tells more about where we actually are than any benchmark or star count.

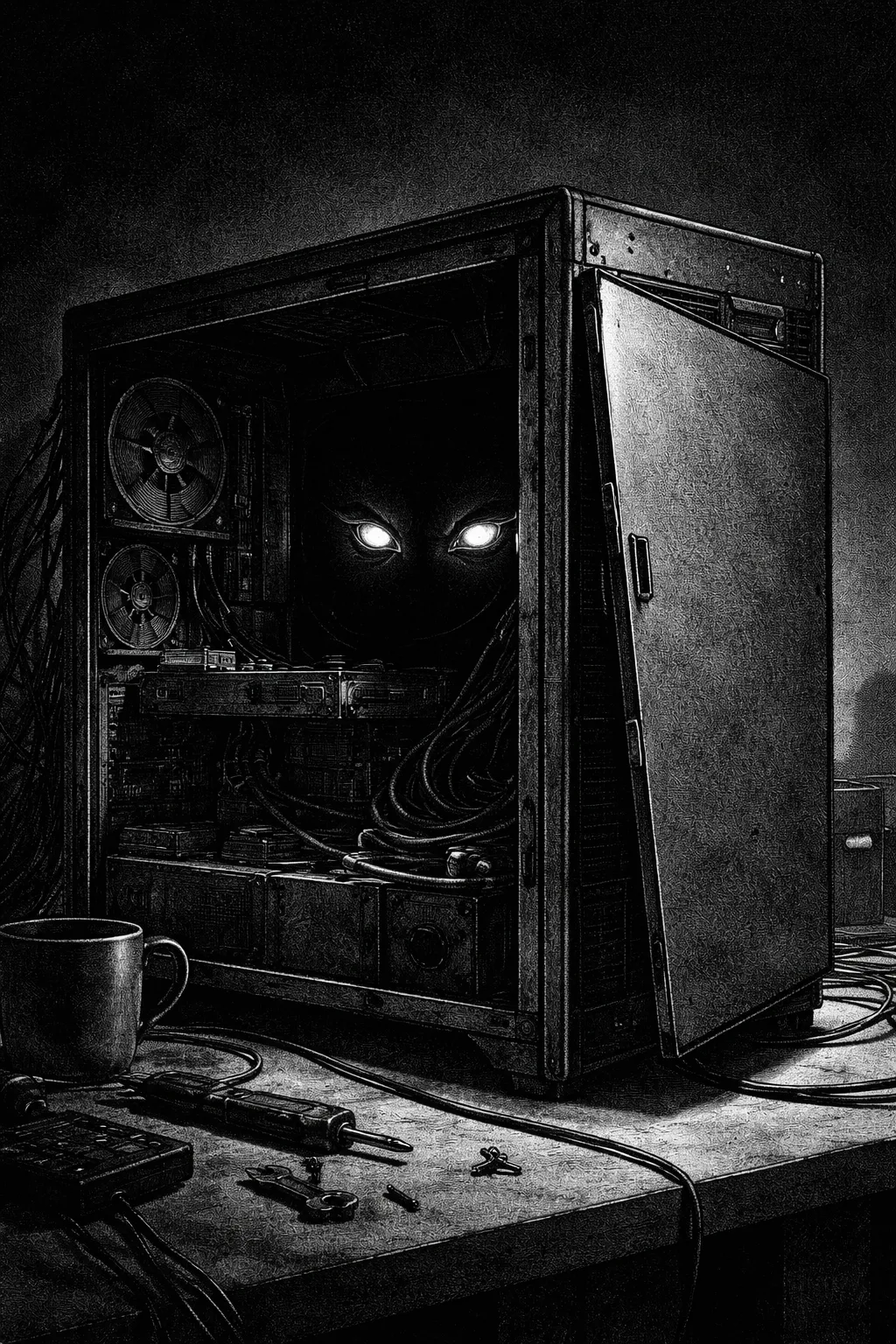

The agent is doing something useful. Fetching a schedule, updating a calendar, moving information between systems. Then it hits a wall, and not always an intelligence wall. Maybe more like a plumbing wall. It needs to interact with a system that was designed, from the pixels down to the permissions, for a human being, sitting in a chair, clicking things with real fingers. So the agent pretends to be that human. It screen-scrapes. It API-wraps around a GUI. It navigates menus built for flesh and eyes. It waits for elements that were designed to load at awkward human-patience speed. It works, sometimes. But watching it feels like watching someone pick a lock on their own front door every morning.

The Dog in the Kitchen

I suck at metaphors, but I love them and fall towards them all the time, ask my wife (no don’t, please). Here I keep reaching for the right one, and right now I’m landing somewhere between comedy and frustration. Maybe a dog in a kitchen. Capable, motivated, knocking things over, eagerly. Not because it’s clumsy, well... but also because the counters are at the wrong height and the door handles require thumbs.

I think it’s an architecture problem. And one nobody seems to want to name directly.

The Floor Again

I’ve mentioned Paul David’s factory electrification problem before. But, quick version: electric motors were available for thirty years before factories got meaningfully faster, because nobody redesigned the factory floor. They just bolted electric motors onto machines arranged for steam power, the whole layout organized around a central shaft that electricity had made unnecessary. The real gains didn’t arrive until someone built a new factory from scratch, around what electricity could actually do.

I’ve been using that analogy to describe agent tooling. Agents bolted onto workflows designed for humans. I think it’s right, as far as it goes. But I’ve realized it might have another layer.

Maybe the workflow isn’t the floor? Maybe the OS is the floor?

When I wrote about the dot interface, I imagined a destination. When I wrote about 2026 being the year of the tool, I was describing the vehicles. I skipped a layer. The road itself. The surface everything runs on. And that surface, the operating system, from the kernel up, was designed around the mental model of a user at a desk, doing one-ish thing at a time, navigating between tools with intent and attention.

The agent has none of those properties. So it fakes them. Every agent framework right now is, at some level, an elaborate impersonation. The agent pretends to be a user because the OS doesn’t offer any other way to exist inside it.

That’s the steam-era floor for you!

What I Mean and What I Don’t

“Agent-first OS” can easily conjure the wrong picture, so I want to be precise:

I’m not talking about Copilot features spread across Windows like seasoning. Not a chatbot pinned to the desktop. Not an AI assistant in a sidebar that does tricks if you ask nicely. Those are the electric motor bolted onto the steam machine. It’s a start, sure. But it feels like an afterthought. Something grafted onto an architecture that was finalized in the olden times, before anyone imagined a non-human actor would need to live inside it.

What I mean is an operating system where the agent is a first-class citizen at the architectural level. Its own process identity. Its own permission model, not a human’s permissions borrowed, but permissions designed for a non-human actor that can operate at inhuman speed across every system on the machine. Its own way of touching files, network, hardware, other processes. The way a user has a session, the agent has a session. Not hacked in later. Designed in from the start.
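To make that a little less hand-wavy, here is a toy sketch of what "the agent has a session" could look like as a data structure. Everything in it is made up for illustration: `AgentSession`, `Grant`, `Action`, and the `file:` / `net:` resource prefixes are hypothetical names, not any real OS API. It only shows the shape of the idea: the agent carries its own identity, separate from the human who delegated to it, holds explicit grants instead of borrowed user permissions, and leaves an audit trail for every check.

```python
from dataclasses import dataclass, field
from enum import Flag, auto


class Action(Flag):
    """Hypothetical set of things an agent can do to a resource."""
    READ = auto()
    WRITE = auto()
    EXECUTE = auto()


@dataclass(frozen=True)
class Grant:
    """One capability: which actions are allowed under a resource prefix.

    Prefixes should end with a separator ("/") so that a grant on
    ".../calendar/" does not accidentally cover ".../calendar-secrets".
    """
    resource_prefix: str
    actions: Action


@dataclass
class AgentSession:
    """Hypothetical agent session with its own identity, grants, and audit log."""
    agent_id: str        # the agent's own identity, not a user's
    on_behalf_of: str    # the human who delegated, tracked separately
    grants: list[Grant] = field(default_factory=list)
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def is_allowed(self, resource: str, action: Action) -> bool:
        """Check a single action against the grants and record the decision."""
        allowed = any(
            resource.startswith(g.resource_prefix) and action in g.actions
            for g in self.grants
        )
        self.audit_log.append((resource, str(action.name), allowed))
        return allowed


session = AgentSession(
    agent_id="agent:scheduler-01",
    on_behalf_of="user:me",
    grants=[
        Grant("file:/home/me/calendar/", Action.READ | Action.WRITE),
        Grant("net:https://school.example/", Action.READ),
    ],
)

print(session.is_allowed("file:/home/me/calendar/week.ics", Action.WRITE))  # True
print(session.is_allowed("file:/home/me/.ssh/id_rsa", Action.READ))         # False
```

The point of the toy is the default: the agent can touch nothing unless something was granted to it by name, which is the opposite of inheriting everything a logged-in human can do. A real version would have to live far below a Python class, and I don’t claim to know what that looks like.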

“OS” is a deliberately provocative word choice, and I know it. A careful engineer would tell me I’m really describing platform architecture, not a kernel problem. Fine. I’m using “OS” because it names the depth of the change I think is coming. Also because I really don’t know what the fuck I’m talking about. But better APIs won’t get us there. Better middleware won’t either. The problem goes down to assumptions baked into how the system thinks about who, or what, is allowed to act inside it. You can patch around a foundational assumption. People are patching right now. But it might be that patching is how you end up with thirty years of electric motors on a steam floor.

The River

Structural bottlenecks don’t survive forever just because they’re structural.

For thousands of years, people have tried to control the great rivers. The Yangtze, the Yellow River, the Ganges, the Nile. Dams, levees, diversions, canals. Sometimes it works for a generation. Sometimes for centuries. Then the river finds a way, because the force behind the water doesn’t care about the engineering on top of it. The Three Gorges Dam is one of the largest structures humans have ever built, and the Yangtze is still reshaping the land around it. The Yellow River has broken its levees catastrophically more times than any engineer wants to count.

You can manage a river. You cannot convince it to stop being a river.

(I’m reading The Nile by Terje Tvedt at the moment.)

The pressure building against the current OS model feels like that to me. Every month, agent capabilities get stronger. More people bolt more capable systems onto a foundation designed for a person with a mouse. The gap between what agents can do and what the floor lets them do keeps widening. That’s a river hitting a dam.

And some people look at this and say: better APIs will handle it. Maybe better protocols. Middleware. Agent runtimes in the cloud. Sandboxes. Enterprise abstraction layers. Maybe they’re right. Maybe one of those channels is where the water goes. I’m not predicting that someone literally rewrites a kernel from scratch (because I don’t know what I’m talking about). I’m predicting that the pressure breaks through somewhere, and when it does, the result looks less like a renovation and more like a new foundation. Whether you call that an OS, a platform, or something nobody’s named yet, the river doesn’t care what you call the channel it cuts.

The Security. The Humanity

An agent with deep system access that gets compromised is not just a sidebar chatbot going rogue. It’s a catastrophe. The attack surface, prompt injection, malware in plugin ecosystems, the horror. Yeah, I know. Multiply those by the depth of access we’re talking about. Not just an agent that can mess with your calendar. This is an agent that can touch your file system, your network connections, your processes, your credentials. Disaster!

“That’s insane, you can’t give an autonomous agent that kind of access without solving the security problem first!”

Probably not.

The internet shipped. Not with a bad security model. More like without a security model. The protocols were designed for a trusted network of researchers, and then the whole world got on, and it was a mess. Credit card numbers in plaintext. Viruses spreading through email attachments people just opened. HTTPS came later. SSL came later. Sandboxing, firewalls, certificate authorities, two-factor, all retrofitted onto a system that was already in the wild, already too valuable to shut down and rebuild.

App stores launched with minimal review. Early smartphones had no real permission model. Apps could access basically anything. The granular controls we take for granted now were engineered in response to problems that only became visible because people shipped first and hardened later.

That’s the pattern. Our pattern. Someone ships it. It’s dangerous. We get hurt. It gets mitigated. Some of it. Fix it when it breaks.

The river. You can argue about the dam. The water is still coming.

The Economics of Delegation

People are paying twenty dollars a month to talk to a model that can’t do much on their behalf. Some are paying two hundred. For a chatbot. A very good chatbot, sure, but a chatbot. Software that talks, mostly, not software that acts.

An operating system where the agent handles the boring layer. Truly handles it. As daily machinery, not a demo. Maybe that’s a different economic proposition? Not just conversation but delegation. The actual disappearance of tasks you do every week that you didn’t even realize were optional until they stopped showing up.

I don’t know what that’s worth in dollars. Don’t have the market knowledge to price it, and I’m not sure the number matters yet. What matters is the category shift. Right now people pay for access to intelligence. The agent-first OS charges for the exercise of that intelligence on your behalf, inside your systems, with real consequences in real workflows. Those are different products the way a cookbook is a different product from a personal chef. You can argue about what the chef costs, but you can’t argue it’s the same thing as the book.

Some of that work is personal: scheduling, organizing, triaging, the school-calendar stuff I’ve written about before. Some of it is professional, and the professional side is where it might get massive. Not because the agent is smarter than the person doing the work, but because so much of professional work isn’t the actual work. It’s the scaffolding around the work. The formatting, the moving, the copying, the checking, the producing of documents that only exist so a decision has somewhere to live.

But I’m deliberately not painting a detailed picture of what that looks like. I spent an entire essay arguing that the Segway was directionally correct in a form nobody predicted, and that Quibi was reaching for TikTok but couldn’t see it from where they stood. Again, I don’t know much, and I don’t know what this would be. The structural claim is that the floor is wrong and someone will rebuild it. What gets built on the new floor is exactly the part nobody gets right from here.

The river is rising. Not because anyone decided it should. Because the capability keeps growing, the demand keeps growing, and the foundation keeps not changing. That pressure resolves. It always does. The question isn’t whether. It’s where the water breaks through, and what it reshapes when it does.